| ANN * | NewANN (int n_inputs, int n_outputs) | Create a new ANN. |

| int | DeleteANN (ANN *ann) | Delete a neural network. |

| ANN * | LoadANN (char *filename) | Load an ANN from a filename. |

| ANN * | LoadANN (FILE *f) | Load an ANN from a C file handle. |

| int | SaveANN (ANN *ann, char *filename) | Save the ANN to a filename. |

| int | SaveANN (ANN *ann, FILE *f) | Save the ANN to a C file handle. |

| int | ANN_AddHiddenLayer (ANN *ann, int n_nodes) | Add a hidden layer with n_nodes nodes. |

| int | ANN_AddRBFHiddenLayer (ANN *ann, int n_nodes) | Add an RBF hidden layer with n_nodes nodes. |

| int | ANN_Init (ANN *ann) | Initialise the neural network. |

| void | ANN_SetOutputsToTanH (ANN *ann) | Set the output activation to hyperbolic tangent. |

| void | ANN_SetOutputsToLinear (ANN *ann) | Set the output activation to linear. |

| void | ANN_SetLearningRate (ANN *ann, real a) | Set the learning rate to a. |

| void | ANN_SetLambda (ANN *ann, real lambda) | Set lambda, the eligibility trace decay. |
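
How the eligibility decay is used is not spelled out in this listing. A common form, assumed here rather than confirmed for this library, is the accumulating trace of TD(\(\lambda\)): each weight keeps a trace \(e_i\) that is multiplied by \(\lambda\) and then incremented by the current gradient, and the weights move along the traces in proportion to a temporal-difference error (compare the `TD` and `use_eligibility` arguments of `ANN_Backpropagate`). The minimal sketch below applies this to a linear predictor; `EligibilityLinear` and its members are hypothetical, and any discount factor is assumed to be folded into \(\lambda\).

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

using real = double;  // assumption: the library's `real` may be float or double

// Hypothetical sketch: linear predictor y = sum_i w[i] * x[i] with
// accumulating eligibility traces in the style of TD(lambda).
struct EligibilityLinear {
    std::vector<real> w, e;  // weights and their eligibility traces
    real a;                  // learning rate
    real lambda;             // eligibility decay

    EligibilityLinear(std::size_t n, real a_, real lambda_)
        : w(n, 0.0), e(n, 0.0), a(a_), lambda(lambda_) {}

    real output(const std::vector<real>& x) const {
        assert(x.size() == w.size());
        real y = 0.0;
        for (std::size_t i = 0; i < w.size(); ++i) y += w[i] * x[i];
        return y;
    }

    // Decay each trace, add the gradient dy/dw[i] = x[i], then move the
    // weight along its trace in proportion to the TD error.
    void update(const std::vector<real>& x, real td) {
        assert(x.size() == w.size());
        for (std::size_t i = 0; i < w.size(); ++i) {
            e[i] = lambda * e[i] + x[i];
            w[i] += a * td * e[i];
        }
    }

    // Clear the traces, e.g. between episodes.
    void reset() { std::fill(e.begin(), e.end(), 0.0); }
};
```

Clearing the traces between episodes corresponds to what `ANN_Reset` is described as doing for the eligibility traces.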

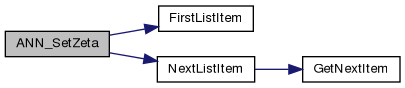

| void | ANN_SetZeta (ANN *ann, real lambda) | Set zeta, parameter variance smoothing. |

| void | ANN_Reset (ANN *ann) | Reset the eligibility traces and batch updates. |

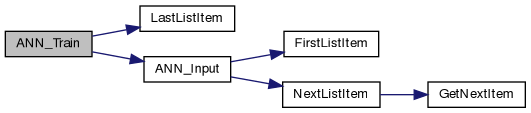

| real | ANN_Input (ANN *ann, real *x) | Give an input vector to the neural network. |
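
A forward pass through a stack of layers can be sketched as below, assuming each hidden layer computes \(\tanh\) of a weighted sum plus a bias and the output layer is either \(\tanh\) or linear, matching the two output settings listed above. `DenseLayer` and `forward` are hypothetical; the library's own bias handling, memory layout and the meaning of the `real` value returned by `ANN_Input` are not described here.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <utility>
#include <vector>

using real = double;  // assumption: the library's `real` may differ

// Hypothetical weighted-sum layer: out[j] = g(sum_k W[j][k] * in[k] + b[j]).
struct DenseLayer {
    std::vector<std::vector<real>> W;  // W[j][k]: weight from input k to output j
    std::vector<real> b;               // one bias per output
    bool linear;                       // true: g(y) = y; false: g(y) = tanh(y)
};

// Propagate x through the layers in order and return the final outputs.
std::vector<real> forward(const std::vector<DenseLayer>& layers,
                          std::vector<real> x) {
    for (const DenseLayer& L : layers) {
        assert(L.W.size() == L.b.size());
        std::vector<real> out(L.W.size());
        for (std::size_t j = 0; j < L.W.size(); ++j) {
            assert(L.W[j].size() == x.size());
            real y = L.b[j];
            for (std::size_t k = 0; k < x.size(); ++k) y += L.W[j][k] * x[k];
            out[j] = L.linear ? y : std::tanh(y);
        }
        x = std::move(out);  // this layer's outputs are the next layer's inputs
    }
    return x;
}
```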

| real | ANN_StochasticInput (ANN *ann, real *x) | Stochastically generate an output, depending on parameter distributions. |
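
One way to read "parameter distributions", assumed here rather than taken from the library, is that every weight has a mean and a standard deviation; a stochastic output is then obtained by drawing each weight from an independent Gaussian and evaluating the model with the sampled weights. The single linear unit below shows the idea; `sample_output` is a hypothetical name, and how the library estimates the spread of each parameter is not described in this listing.

```cpp
#include <cassert>
#include <cstddef>
#include <random>
#include <vector>

using real = double;  // assumption: the library's `real` may differ

// Hypothetical sketch: sample w_i ~ N(mean[i], sd[i]^2) independently,
// then return y = sum_i w_i * x[i].
real sample_output(const std::vector<real>& mean, const std::vector<real>& sd,
                   const std::vector<real>& x, std::mt19937& rng) {
    assert(mean.size() == sd.size() && mean.size() == x.size());
    real y = 0.0;
    for (std::size_t i = 0; i < x.size(); ++i) {
        real w_i = mean[i];  // zero spread: the weight is known exactly
        if (sd[i] > 0.0) {   // std::normal_distribution requires sd > 0
            std::normal_distribution<real> dist(mean[i], sd[i]);
            w_i = dist(rng);
        }
        y += w_i * x[i];
    }
    return y;
}
```

Averaging many such samples approaches the output computed with the mean weights.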

| real | ANN_Train (ANN *ann, real *x, real *t) | Perform mean square error training, minimising the cost function \(\sum_i \lVert f(x_i)-t_i\rVert^2\), where \(x_i\) is the input data, \(f(\cdot)\) is the mapping performed by the neural network, \(t_i\) is the desired output and \(i\) denotes the example index. |
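
The squared-error cost can be minimised by stochastic gradient descent, one example at a time. As a minimal sketch, a one-input linear model \(f(x) = wx + b\) stands in for the network here, and each step uses \(\partial C_i/\partial f = 2(f(x_i) - t_i)\). `Linear1D`, `squared_error_cost` and `train_step` are hypothetical names; whether the library keeps the factor 2 or absorbs it into the learning rate is not stated in this listing.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

using real = double;  // assumption: the library's `real` may differ

// Hypothetical one-input linear model f(x) = w * x + b.
struct Linear1D {
    real w = 0.0, b = 0.0;
    real operator()(real x) const { return w * x + b; }
};

// The cost sum_i (f(x_i) - t_i)^2 over a data set.
real squared_error_cost(const Linear1D& f, const std::vector<real>& x,
                        const std::vector<real>& t) {
    assert(x.size() == t.size());
    real c = 0.0;
    for (std::size_t i = 0; i < x.size(); ++i) {
        real e = f(x[i]) - t[i];
        c += e * e;
    }
    return c;
}

// One gradient step on a single example (x, t) with learning rate a:
// dC/df = 2 (f(x) - t), df/dw = x, df/db = 1.
void train_step(Linear1D& f, real x, real t, real a) {
    real d = 2.0 * (f(x) - t);
    f.w -= a * d * x;
    f.b -= a * d;
}
```

Repeating `train_step` over the examples for several epochs drives the cost towards its minimum, provided the learning rate is small enough for the step to be stable.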

| real | ANN_Delta_Train (ANN *ann, real *delta, real TD=0.0) | Minimise a custom cost function. |
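
Minimising a custom cost only requires the derivative of that cost with respect to the network outputs; the rest of the gradient then follows by backpropagation, exactly as for squared error. Assuming that `delta` carries this output derivative (its sign convention and the role of `TD` are not specified in this listing), the sketch below trains a linear output under the absolute-error cost \(|y - t|\), whose derivative is \(\operatorname{sign}(y - t)\). `abs_error_delta`, `delta_step` and `linear_output` are hypothetical names.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

using real = double;  // assumption: the library's `real` may differ

// Hypothetical: derivative of C(y) = |y - t| with respect to the output y
// (0 is used at the kink y == t).
real abs_error_delta(real y, real t) {
    return (y > t) ? 1.0 : (y < t) ? -1.0 : 0.0;
}

// Hypothetical gradient step for y = sum_i w[i] * x[i] given delta = dC/dy:
// since dy/dw[i] = x[i], dC/dw[i] = delta * x[i].
void delta_step(std::vector<real>& w, const std::vector<real>& x,
                real delta, real a) {
    assert(w.size() == x.size());
    for (std::size_t i = 0; i < w.size(); ++i) w[i] -= a * delta * x[i];
}

real linear_output(const std::vector<real>& w, const std::vector<real>& x) {
    assert(w.size() == x.size());
    real y = 0.0;
    for (std::size_t i = 0; i < w.size(); ++i) y += w[i] * x[i];
    return y;
}
```

Swapping `abs_error_delta` for another derivative changes the cost being minimised without touching the update code.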

| void | ANN_SetBatchMode (ANN *ann, bool batch) | Enable or disable batch updates. |

| void | ANN_BatchAdapt (ANN *ann) | Adapt the parameters after a series of patterns has been seen. |
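
In batch mode, gradients are accumulated over a series of patterns and applied only when the batch is adapted, after which the accumulator is cleared. The sketch below illustrates this split for a linear model under squared error; `BatchLinear`, `accumulate` and `adapt` are hypothetical names, and summing (rather than averaging) the accumulated gradients is an assumption.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

using real = double;  // assumption: the library's `real` may differ

// Hypothetical linear model y = sum_i w[i] * x[i] trained in batch mode.
struct BatchLinear {
    std::vector<real> w, acc;  // weights and accumulated gradient
    real a;                    // learning rate

    BatchLinear(std::size_t n, real a_) : w(n, 0.0), acc(n, 0.0), a(a_) {}

    real output(const std::vector<real>& x) const {
        assert(x.size() == w.size());
        real y = 0.0;
        for (std::size_t i = 0; i < w.size(); ++i) y += w[i] * x[i];
        return y;
    }

    // Add the squared-error gradient 2 (y - t) x for one pattern;
    // the weights themselves are not changed.
    void accumulate(const std::vector<real>& x, real t) {
        real d = 2.0 * (output(x) - t);
        for (std::size_t i = 0; i < w.size(); ++i) acc[i] += d * x[i];
    }

    // Apply the summed gradient once, then clear it for the next batch.
    void adapt() {
        for (std::size_t i = 0; i < w.size(); ++i) w[i] -= a * acc[i];
        std::fill(acc.begin(), acc.end(), 0.0);
    }
};
```

Because the weights stay fixed within a batch, every pattern in it sees the same model, which makes the result independent of pattern order.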

| real | ANN_Test (ANN *ann, real *x, real *t) | Given an input and a target pattern, return the MSE between the network's output and the target pattern. |

| real * | ANN_GetOutput (ANN *ann) | Get the output for the current input. |

| real | ANN_GetError (ANN *ann) | Get the error for the current input/output pair. |

| real * | ANN_GetErrorVector (ANN *ann) | Return the error vector for the current pattern. |

| Layer * | ANN_AddLayer (ANN *ann, int n_inputs, int n_outputs, real *x) | Low-level code to add a weighted-sum layer. |

| Layer * | ANN_AddRBFLayer (ANN *ann, int n_inputs, int n_outputs, real *x) | Low-level code to add an RBF layer. |

| void | ANN_FreeLayer (void *l) | Free this layer (low level). |

| void | ANN_FreeLayer (Layer *l) | Free this layer (low level). |

| void | ANN_CalculateLayerOutputs (Layer *current_layer, bool stochastic=false) | Calculate layer outputs. |

| real | ANN_Backpropagate (LISTITEM *p, real *d, bool use_eligibility=false, real TD=0.0) | Backpropagation for a weighted-sum layer; d are the derivatives of the cost with respect to the layer outputs. |
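
For a weighted-sum layer with outputs \(o_j = \tanh(y_j)\), \(y_j = \sum_k W_{jk} x_k\), the derivatives \(d_j = \partial C/\partial o_j\) at the outputs give the weight gradient \(\partial C/\partial W_{jk} = d_j (1 - o_j^2) x_k\) and the input derivatives \(\partial C/\partial x_k = \sum_j d_j (1 - o_j^2) W_{jk}\), which serve as the output derivatives of the layer below. The sketch assumes this is what the routine computes for one layer; it omits biases, eligibility traces and the `TD` argument, and `tanh_layer` and `backprop_tanh_layer` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

using real = double;                            // assumption: library's `real` may differ
using Matrix = std::vector<std::vector<real>>;  // W[j][k]: weight from input k to output j

// Hypothetical forward pass of one tanh layer: o[j] = tanh(sum_k W[j][k] * x[k]).
std::vector<real> tanh_layer(const Matrix& W, const std::vector<real>& x) {
    std::vector<real> o(W.size());
    for (std::size_t j = 0; j < W.size(); ++j) {
        assert(W[j].size() == x.size());
        real y = 0.0;
        for (std::size_t k = 0; k < x.size(); ++k) y += W[j][k] * x[k];
        o[j] = std::tanh(y);
    }
    return o;
}

// Hypothetical backward pass: given d[j] = dC/do[j], fill grad[j][k] = dC/dW[j][k]
// and return dC/dx[k], the derivatives to pass to the layer below.
std::vector<real> backprop_tanh_layer(const Matrix& W, const std::vector<real>& x,
                                      const std::vector<real>& o,
                                      const std::vector<real>& d, Matrix& grad) {
    assert(o.size() == W.size() && d.size() == W.size());
    std::vector<real> dx(x.size(), 0.0);
    grad.assign(W.size(), std::vector<real>(x.size(), 0.0));
    for (std::size_t j = 0; j < W.size(); ++j) {
        real delta = d[j] * (1.0 - o[j] * o[j]);  // dC/dy[j], using tanh' = 1 - tanh^2
        for (std::size_t k = 0; k < x.size(); ++k) {
            grad[j][k] = delta * x[k];
            dx[k] += delta * W[j][k];
        }
    }
    return dx;
}
```

Computing the derivative from the stored outputs \(o_j\) avoids re-evaluating \(\tanh\) during the backward pass.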

| void | ANN_RBFCalculateLayerOutputs (Layer *current_layer, bool stochastic=false) | Calculate RBF layer outputs. |
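
An RBF unit responds to the distance between the input and a centre instead of to a weighted sum. The sketch below assumes Gaussian units, \(\phi_j(x) = \exp\bigl(-\lVert x - c_j\rVert^2 / (2\sigma_j^2)\bigr)\) with centre \(c_j\) and width \(\sigma_j\); the header does not state the library's exact parameterisation (it may, for instance, use per-dimension widths), and `rbf_layer` is a hypothetical name.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

using real = double;  // assumption: the library's `real` may differ

// Hypothetical Gaussian RBF layer: out[j] = exp(-||x - c_j||^2 / (2 sigma_j^2)).
std::vector<real> rbf_layer(const std::vector<std::vector<real>>& centres,
                            const std::vector<real>& sigma,
                            const std::vector<real>& x) {
    assert(centres.size() == sigma.size());
    std::vector<real> out(centres.size());
    for (std::size_t j = 0; j < centres.size(); ++j) {
        assert(centres[j].size() == x.size() && sigma[j] > 0.0);
        real dist2 = 0.0;  // squared Euclidean distance to centre j
        for (std::size_t k = 0; k < x.size(); ++k) {
            real diff = x[k] - centres[j][k];
            dist2 += diff * diff;
        }
        out[j] = std::exp(-dist2 / (2.0 * sigma[j] * sigma[j]));
    }
    return out;
}
```

Each output equals 1 when the input sits on that unit's centre and decays towards 0 with distance, so an RBF layer gives localised basis functions for the layer above.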

| real | ANN_RBFBackpropagate (LISTITEM *p, real *d, bool use_eligibility=false, real TD=0.0) | Backpropagation for an RBF layer. |

| void | ANN_LayerBatchAdapt (Layer *l) | Perform batch adaptation for a single layer. |

| real | Exp (real x) | Exponential hook. |

| real | Exp_d (real x) | Exponential derivative hook. |

| real | htan (real x) | Hyperbolic tangent hook. |

| real | htan_d (real x) | Hyperbolic tangent derivative hook. |

| real | dtan (real x) | Discrete htan hook. |

| real | dtan_d (real x) | Discrete htan derivative hook. |

| real | linear (real x) | Linear hook. |

| real | linear_d (real x) | Linear derivative hook. |
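
The activation hooks above come in function/derivative pairs: the forward pass uses the function and backpropagation uses its derivative. The sketch below writes three such pairs under hypothetical names and checks each derivative against a central finite difference. It assumes every derivative takes the same pre-activation argument \(x\) as its function; the library's derivative hooks might instead take an already-computed output, and the discrete `dtan`/`dtan_d` pair is not reproduced because its definition is not given here.

```cpp
#include <cassert>
#include <cmath>
#include <functional>
#include <initializer_list>

using real = double;  // assumption: the library's `real` may differ

// Hypothetical function/derivative pairs, both taking the pre-activation x.
real exp_fn(real x) { return std::exp(x); }
real exp_deriv(real x) { return std::exp(x); }  // d/dx e^x = e^x
real tanh_fn(real x) { return std::tanh(x); }
real tanh_deriv(real x) {
    real t = std::tanh(x);
    return 1.0 - t * t;  // d/dx tanh x = 1 - tanh^2 x
}
real linear_fn(real x) { return x; }
real linear_deriv(real) { return 1.0; }

// Largest gap between df and the central difference (f(x+h) - f(x-h)) / 2h
// over a few sample points.
real derivative_gap(const std::function<real(real)>& f,
                    const std::function<real(real)>& df) {
    assert(f && df);  // both hooks must be set
    const real h = 1e-5;
    real worst = 0.0;
    for (real x : {-2.0, -0.5, 0.0, 0.5, 2.0}) {
        real numeric = (f(x + h) - f(x - h)) / (2.0 * h);
        worst = std::fmax(worst, std::fabs(numeric - df(x)));
    }
    return worst;
}
```

A check like `derivative_gap` is a cheap way to catch a mismatched pair before it silently corrupts the gradients.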

| real | ANN_LayerShowWeights (Layer *l) | Dump the weights of a particular layer on stdout. |

| real | ANN_ShowWeights (ANN *ann) | Dump the weights on stdout. |

| void | ANN_ShowOutputs (ANN *ann) | Dump outputs to stdout. |

| real | ANN_ShowInputs (ANN *ann) | Dump inputs to all layers on stdout. |

| real | ANN_LayerShowInputs (Layer *l) | Dump inputs to a particular layer on stdout. |

A neural network implementation.

A neural network is a parametric function composed of a number of 'layers'. Each layer can be expressed as a function \(g(y)\) with \(y = \sum_i w_i f_i(x)\), where the \(w_i\) are weights and the \(f_i(\cdot)\) are basis functions. The basis functions can be fixed, or they can be the outputs of another layer. The neural network can be adapted to minimise some cost criterion \(C\) (defined on some data) via gradient descent on the weights. The required gradient follows from the chain rule: \(\partial C/\partial w_i = (\partial C/\partial g)(\partial g/\partial y)(\partial y/\partial w_i) = (\partial C/\partial g)\, g'(y)\, f_i(x)\). When the \(f_i\) are outputs of a lower layer, the same rule gives \(\partial C/\partial f_i = (\partial C/\partial g)\, g'(y)\, w_i\), which is passed backwards as the output derivative of that layer.
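
As a concrete instance of this chain rule, take a single output unit with \(g = \tanh\) and the squared-error cost \(C = \tfrac{1}{2}\bigl(g(y) - t\bigr)^2\) on one example with target \(t\); the factor \(\tfrac12\) is a convention chosen here so that it cancels the 2 from differentiation. Term by term:

\[
\begin{aligned}
y &= \sum_i w_i f_i(x), \\
\frac{\partial C}{\partial g} &= g(y) - t, \qquad
\frac{\partial g}{\partial y} = 1 - \tanh^2(y) = 1 - g(y)^2, \qquad
\frac{\partial y}{\partial w_i} = f_i(x), \\
\frac{\partial C}{\partial w_i} &= \bigl(g(y) - t\bigr)\bigl(1 - g(y)^2\bigr)\, f_i(x).
\end{aligned}
\]

A gradient-descent step with learning rate \(a\) is then \(w_i \leftarrow w_i - a\,\bigl(g(y) - t\bigr)\bigl(1 - g(y)^2\bigr) f_i(x)\).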

Definition in file ANN.h.