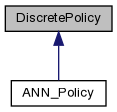

Discrete policies with reinforcement learning. More...

#include <policy.h>

Public Member Functions | |

| DiscretePolicy (int n_states, int n_actions, real alpha=0.1, real gamma=0.8, real lambda=0.8, bool softmax=false, real randomness=0.1, real init_eval=0.0) | |

| Create a new discrete policy. More... | |

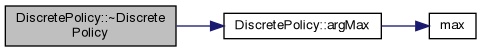

| virtual | ~DiscretePolicy () |

| Kill the agent and free everything. More... | |

| virtual void | setLearningRate (real alpha) |

| Set the learning rate. More... | |

| virtual real | getTDError () |

| Get the temporal difference error of the previous action. More... | |

| virtual real | getLastActionValue () |

| Get the vale of the last action taken. More... | |

| virtual int | SelectAction (int s, real r, int forced_a=-1) |

| Select an action a, given state s and reward from previous action. More... | |

| virtual void | Reset () |

| Use at the end of every episode, after agent has entered the absorbing state. More... | |

| virtual void | loadFile (char *f) |

| Load policy from a file. More... | |

| virtual void | saveFile (char *f) |

| Save policy to a file. More... | |

| virtual void | setQLearning () |

| Set the algorithm to QLearning mode. More... | |

| virtual void | setELearning () |

| Set the algorithm to ELearning mode. More... | |

| virtual void | setSarsa () |

| Set the algorithm to SARSA mode. More... | |

| virtual bool | useConfidenceEstimates (bool confidence, real zeta=0.01, bool confidence_eligibility=false) |

| Set to use confidence estimates for action selection, with variance smoothing zeta. More... | |

| virtual void | setForcedLearning (bool forced) |

| Set forced learning (force-feed actions) More... | |

| virtual void | setRandomness (real epsilon) |

| Set randomness for action selection. Does not affect confidence mode. More... | |

| virtual void | setGamma (real gamma) |

| Set the gamma of the sum to be maximised. More... | |

| virtual void | setPursuit (bool pursuit) |

| Use Pursuit for action selection. More... | |

| virtual void | setReplacingTraces (bool replacing) |

| Use Pursuit for action selection. More... | |

| virtual void | useSoftmax (bool softmax) |

| Set action selection to softmax. More... | |

| virtual void | setConfidenceDistribution (enum ConfidenceDistribution cd) |

| Set the distribution for direct action sampling. More... | |

| virtual void | useGibbsConfidence (bool gibbs) |

| Add Gibbs sampling for confidences. More... | |

| virtual void | useReliabilityEstimate (bool ri) |

| Use the reliability estimate method for action selection. More... | |

| virtual void | saveState (FILE *f) |

| Save the current evaluations in text format to a file. More... | |

Protected Member Functions | |

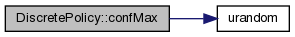

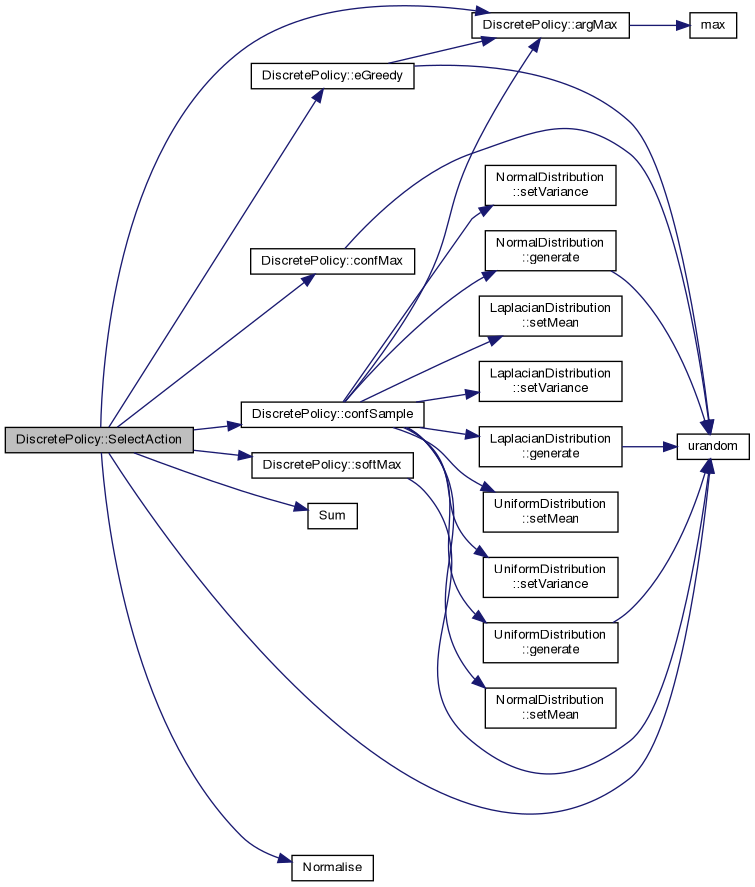

| int | confMax (real *Qs, real *vQs, real p=1.0) |

| Confidence-based Gibbs sampling. More... | |

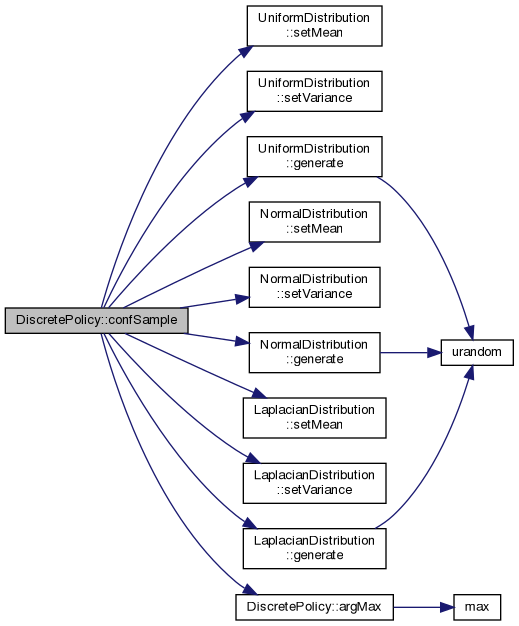

| int | confSample (real *Qs, real *vQs) |

| Directly sample from action value distribution. More... | |

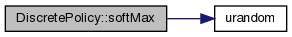

| int | softMax (real *Qs) |

| Softmax Gibbs sampling. More... | |

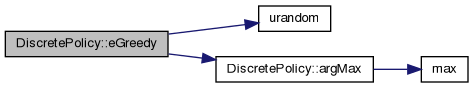

| int | eGreedy (real *Qs) |

| e-greedy sampling More... | |

| int | argMax (real *Qs) |

| Get ID of maximum action. More... | |

Protected Attributes | |

| enum LearningMethod | learning_method |

| learning method to use; More... | |

| int | n_states |

| number of states More... | |

| int | n_actions |

| number of actions More... | |

| real ** | Q |

| state-action evaluation More... | |

| real ** | e |

| eligibility trace More... | |

| real * | eval |

| evaluation of current aciton More... | |

| real * | sample |

| sampling output More... | |

| real | pQ |

| previous Q More... | |

| int | ps |

| previous state More... | |

| int | pa |

| previous action More... | |

| real | r |

| reward More... | |

| real | temp |

| scratch More... | |

| real | tdError |

| temporal difference error More... | |

| bool | smax |

| softmax option More... | |

| bool | pursuit |

| pursuit option More... | |

| real ** | P |

| pursuit action probabilities More... | |

| real | gamma |

| Future discount parameter. More... | |

| real | lambda |

| Eligibility trace decay. More... | |

| real | alpha |

| learning rate More... | |

| real | expected_r |

| Expected reward. More... | |

| real | expected_V |

| Expected state return. More... | |

| int | n_samples |

| number of samples for above expected r and V More... | |

| int | min_el_state |

| min state ID to search for eligibility More... | |

| int | max_el_state |

| max state ID to search for eligibility More... | |

| bool | replacing_traces |

| Replacing instead of accumulating traces. More... | |

| bool | forced_learning |

| Force agent to take supplied action. More... | |

| bool | confidence |

| Confidence estimates option. More... | |

| bool | confidence_eligibility |

| Apply eligibility traces to confidence. More... | |

| bool | reliability_estimate |

| reliability estimates option More... | |

| enum ConfidenceDistribution | confidence_distribution |

| Distribution to use for confidence sampling. More... | |

| bool | confidence_uses_gibbs |

| Additional gibbs sampling for confidence. More... | |

| real | zeta |

| Confidence smoothing. More... | |

| real ** | vQ |

| variance estimate for Q More... | |

Discrete policies with reinforcement learning.

This class implements a discrete policy using the Sarsa( \(\lambda\)) or Q( \(\lambda\)) Reinforcement Learning algorithm. After creating an instance of the algorithm were the number of actions and states are specified, you can call the SelectAction() method at every time step to dynamically select an action according to the given state. At the same time you must provide a reinforcement, which should be large (or positive) for when the algorithm is doing well, and small (or negative) when it is not. The algorithm will try and maximise the total reward received.

Parameters:

n_states: The number of discrete states, or situations, that the agent is in. You should create states that are relevant to the task.

n_actions: The number of different things the agent can do at every state. Currently we assume that all actions are usable at all states. However if an action a_i is not usable in some state s, then you can make the client side of the code for state s map state a_i to some usable state a_j, meaning that when the agent selects action a_i in state s, the result would be that of taking one of the usable actions. This will make the two actions equivalent. Alternatively when the agent selects an unusable action at a particular state, you could have a random outcome of actions. The algorithm can take care of that, since it assumes no relationship between the same action at different states.

alpha: Learning rate. Controls how fast the evaluation is changed at every time step. Good values are between 0.01 and 0.1. Lower than 0.01 makes learning too slow, higher than 0.1 makes learning unstable, particularly for high gamma and lambda.

gamma: The algorithm will maximise the exponentially decaying sum of all future rewards, were the base of the exponent is gamma. If gamma=0, then the algorithm will always favour short-term rewards over long-term ones. Setting gamma close to 1 will make long-term rewards more important.

lambda: This controls how much the expected value of reinforcement is close to the observed value of reinforcement. Another view is that of it controlling the eligibility traces e. Eligibility traces perform temporal credit assignment to previous actions/states. High values of lambda (near 1) can speed up learning. With lambda=0, only the currently selected action/state pair evaluation is updated. With lambda>0 all state/action pair evaluations taken in the past are updated, with most recent pairs updated more than pairs further in the past. Ultimately the optimal value of lambda will depend on the task.

softmax, randomness: If this is false, then the algorithm selects the best possible action all the time, but with probability 'randomness' selects a completely random action at each timestep. If this is true, then the algorithm selects actions stochastically, with probability of selecting each action proportional to how better it seems to be than the others. A high randomness (>10.0) will create more or less equal probabilities for actions, while a low randomness (<0.1) will make the system almost deterministic.

init_eval: This is the initial evaluation for actions. It pays to set this to optimistic values (i.e. higher than the reinforcement to be given), so that the algorithm becomes always 'disappointed' and tries to explore as much of the possible combinations as it can.

Member functions:

setQLearning(): Use the Q( \(\lambda\)) algorithm

setELearning(): Use the E( \(\lambda\)) algorithm

setSarsa(): Use the Sarsa( \(\lambda\)) algorithm

All the above algorithms attempt to approximate the optimal value function by using an update of the form

\[ Q_{t+1}(s,a) = Q_{t}(s,a) + \alpha (r_{t+1} + \gamma E\{Q(s'|\pi)\} - Q_{t}(s,a)). \]

The difference lies in how the expectation operator is approximated. In the case of SARSA, it is done by directly sampling the current policy, i.e. the expected value of Q for the next state is the current evaluation for the action actually taken in the next state. In Q-learning the expected value is that of the greedy action in the next state (with some minor complications to accommodate eligibility traces). E-learning is the most general case. The simplest implementation works by just replacing the single sample from Q with \(\sum_{b} Q(s',b) P(a'=b|s')\). This lies somewhere in between SARSA and Q-learning.

setPursuit (bool pursuit): If true, use pursuit methods to determine the best possible action. This enforces maximum exploration initially, and maximum exploitation of estimates later. I am not sure of the convergence properties of this method, however, when used in conjuction with Sarsa or Q-learning.

useConfidenceEstimates(bool confidence, float zeta): Use confidence estimates for the estimated parameters. This allows automatic exploration-exploitation tradeoffs. The zeta parameter controls how smooth the estimates of the confidence are, lower values for smoother. (defaults to 0.01). Now, given estimates Q_1 and Q_2 for actions 1,2 respectively we assume a Laplacian distribution centered around Q_1 and Q_2, with variance equal to v_1 and v_2. With Gibbs sampling, the probability of selecting action Q_1 is then

\[ P[1]=exp(Q_1/\sqrt{v_1})/(exp(Q_1/\sqrt{v_1})+exp(Q_2/\sqrt{v2})) \]

.

However it is possible to perform direct sampling.

| DiscretePolicy::DiscretePolicy | ( | int | n_states, |

| int | n_actions, | ||

| real | alpha = 0.1, |

||

| real | gamma = 0.8, |

||

| real | lambda = 0.8, |

||

| bool | softmax = false, |

||

| real | randomness = 0.1, |

||

| real | init_eval = 0.0 |

||

| ) |

Create a new discrete policy.

Definition at line 42 of file policy.cpp.

|

virtual |

Kill the agent and free everything.

Delete policy.

Definition at line 155 of file policy.cpp.

|

protected |

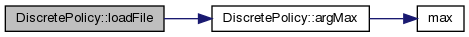

Get ID of maximum action.

Definition at line 816 of file policy.cpp.

Confidence-based Gibbs sampling.

Definition at line 715 of file policy.cpp.

Directly sample from action value distribution.

Definition at line 749 of file policy.cpp.

|

protected |

e-greedy sampling

Definition at line 802 of file policy.cpp.

|

inlinevirtual |

Get the vale of the last action taken.

Reimplemented in ANN_Policy.

|

inlinevirtual |

|

virtual |

Load policy from a file.

Definition at line 484 of file policy.cpp.

|

virtual |

Use at the end of every episode, after agent has entered the absorbing state.

Reimplemented in ANN_Policy.

Definition at line 474 of file policy.cpp.

|

virtual |

Save policy to a file.

Definition at line 550 of file policy.cpp.

|

virtual |

Save the current evaluations in text format to a file.

The values are saved as triplets (Q, P, vQ). The columns are ordered by actions and the rows by state number.

Definition at line 128 of file policy.cpp.

|

virtual |

Select an action a, given state s and reward from previous action.

Optional argument a forces an action if setForcedLearning() has been called with true.

Two algorithms are implemented, both of which converge. One of them calculates the value of the current policy, while the other that of the optimal policy.

Sarsa ( \(\lambda\)) algorithmic description:

\[ Q_{t}(s,a) = Q_{t-1}(s,a) + \alpha \delta e_{t}(s,a), \]

where \(e_{t}(s,a) = \gamma \lambda e_{t-1}(s,a)\)end

Watkins Q (l) algorithmic description:

\[ Q(s,a) = Q(s,a)+\alpha \delta e(s,a) \]

if \((a'=a*)\) then \(e(s,a)\) = \(\gamma \lambda e(s,a)\) else \(e(s,a) = 0\) endThe most general algorithm is E-learning, currently under development, which is defined as follows:

\[ Q(s,a) = Q(s,a)+\alpha \delta e(s,a) \]

\(e(s,a)\) = \(\gamma \lambda e(s,a) P(a|s,\pi) \)Note that we also cut off the eligibility traces that have fallen below 0.1

Definition at line 283 of file policy.cpp.

|

virtual |

Set the distribution for direct action sampling.

Definition at line 684 of file policy.cpp.

|

virtual |

Set the algorithm to ELearning mode.

Definition at line 604 of file policy.cpp.

|

virtual |

Set forced learning (force-feed actions)

Definition at line 639 of file policy.cpp.

|

virtual |

Set the gamma of the sum to be maximised.

Definition at line 656 of file policy.cpp.

|

inlinevirtual |

|

virtual |

Use Pursuit for action selection.

Definition at line 618 of file policy.cpp.

|

virtual |

Set the algorithm to QLearning mode.

Definition at line 598 of file policy.cpp.

|

virtual |

Set randomness for action selection. Does not affect confidence mode.

Definition at line 645 of file policy.cpp.

|

virtual |

Use Pursuit for action selection.

Definition at line 629 of file policy.cpp.

|

virtual |

Set the algorithm to SARSA mode.

A unified framework for action selection.

Definition at line 611 of file policy.cpp.

|

protected |

Softmax Gibbs sampling.

Definition at line 783 of file policy.cpp.

|

virtual |

Set to use confidence estimates for action selection, with variance smoothing zeta.

Variance smoothing currently uses a very simple method to estimate the variance.

Definition at line 580 of file policy.cpp.

|

virtual |

Add Gibbs sampling for confidences.

This can be used in conjuction with any confidence distribution, however it mostly makes sense for SINGULAR.

Definition at line 704 of file policy.cpp.

|

virtual |

Use the reliability estimate method for action selection.

Definition at line 673 of file policy.cpp.

|

virtual |

Set action selection to softmax.

Definition at line 662 of file policy.cpp.

|

protected |

|

protected |

Distribution to use for confidence sampling.

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |

|

protected |